In October 2025, a routine bankruptcy proceeding in Montgomery, Alabama, became a national headline about artificial intelligence, ethics, and human error.

At the center was Gordon Rees Scully Mansukhani LLP, a large U.S. law firm with more than 1,800 attorneys, and one of its lawyers, Cassie Preston, who represented a creditor in the Chapter 11 case of Jackson Hospital & Clinic, Inc.

During the case, a court filing prepared with generative AI contained fabricated and misquoted legal citations. These are the kind of errors AI tools sometimes produce, known as hallucinations. U.S. Bankruptcy Judge Christopher Hawkins spotted the inconsistencies and ordered Preston and the firm to explain themselves. What followed was both sobering and deeply human.

How It Happened

In her official Response to the Court’s Order to Show Cause, Preston admitted that she had known AI was used to draft the motion and that, out of fear and embarrassment, she had not been truthful about it at the initial hearing. She also stated that the AI-assisted work did not come from an associate at the firm, and she later withdrew the motion.

“I allowed my loyalty and desire to help a friend override my better judgment,” Preston wrote, explaining that she had taken on too much work while enduring personal hardships. “I can only ask the Court to show mercy.”

Reuters reported that Gordon Rees called the incident “profoundly embarrassing” and had since implemented stricter AI education, training, and citation-checking policies. The firm also agreed to pay more than $55,000 in legal fees to other parties affected by the AI errors.

Why It Matters

Generative AI tools such as ChatGPT can produce polished, convincing text even when that text is wrong. In law, that is dangerous. Every case citation, statute, or precedent must be verifiable. AI hallucinations can mislead judges, waste hours of opposing counsel’s time, and erode trust in the legal system.

This case underscores a growing challenge across professional fields: AI tools are powerful, but they cannot replace human responsibility. Lawyers, doctors, and engineers are quickly learning that automation requires constant oversight.

For Non-Lawyers: How Generative AI “Hallucinates”

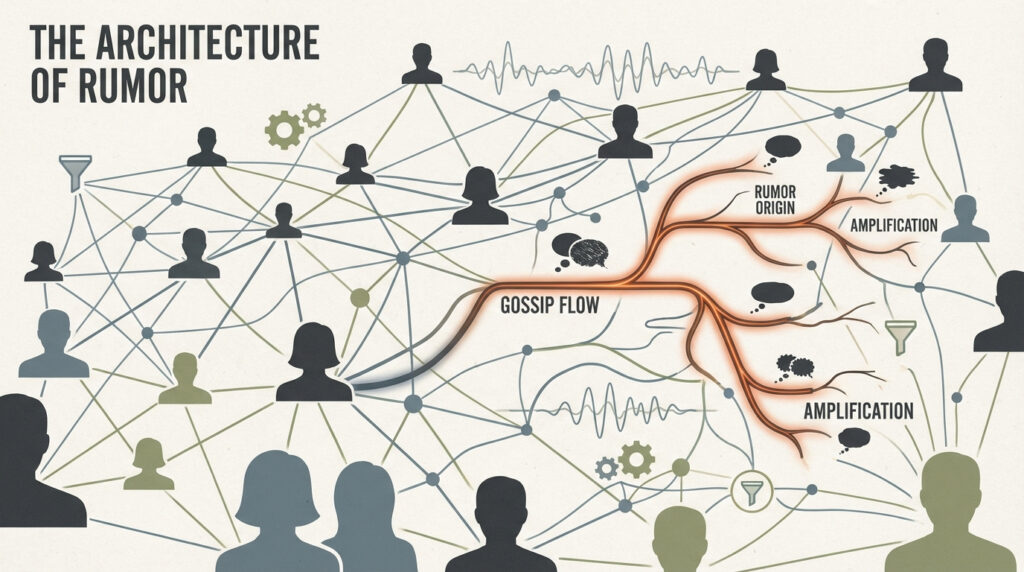

Imagine asking a very confident person to explain a topic they don’t fully understand. Instead of admitting “I don’t know,” they make up something that sounds right.

That’s what AI hallucinations are: confidently stated but incorrect information. The machine isn’t malicious; it is simply predicting which words are likely to come next, not checking whether they are factually true.

When lawyers use AI without double-checking, the system might cite fake cases, misquote real rulings, or invent nonexistent judges. In a courtroom, that’s catastrophic.

Why It’s Still Cool (and Inevitable)

Despite the risks, AI in law has huge potential. It can summarize massive records, spot patterns in rulings, and even suggest strategies, potentially saving hundreds of hours of work. Used responsibly, it becomes a copilot for human expertise, not a replacement.

The Gordon Rees episode serves as a wake-up call, not a death sentence for AI in law. The technology just needs guardrails, and perhaps a bit more humility from those who wield it.

What’s Next

A growing number of U.S. courts now require lawyers to disclose their use of AI, and some law schools are adding AI ethics courses to their curricula. Many firms are implementing “AI review protocols,” which mandate human verification of AI-assisted documents before they are filed.

For the public, this case shows that even in high-stakes settings, AI is only as reliable as the humans behind it. As one observer put it: “Artificial intelligence still needs real intelligence to keep it honest.”


Sources:

Merken, S. (2025, October 24). Large US law firm apologizes for AI errors in bankruptcy court filing. Reuters. https://www.reuters.com/legal/litigation/large-us-law-firm-apologizes-ai-errors-bankruptcy-court-filing-2025-10-24/

Preston, C. (2025, October 23). Cassie Preston’s Response to Order to Show Cause. Case No. 25-30256-CLH, United States Bankruptcy Court for the Middle District of Alabama.