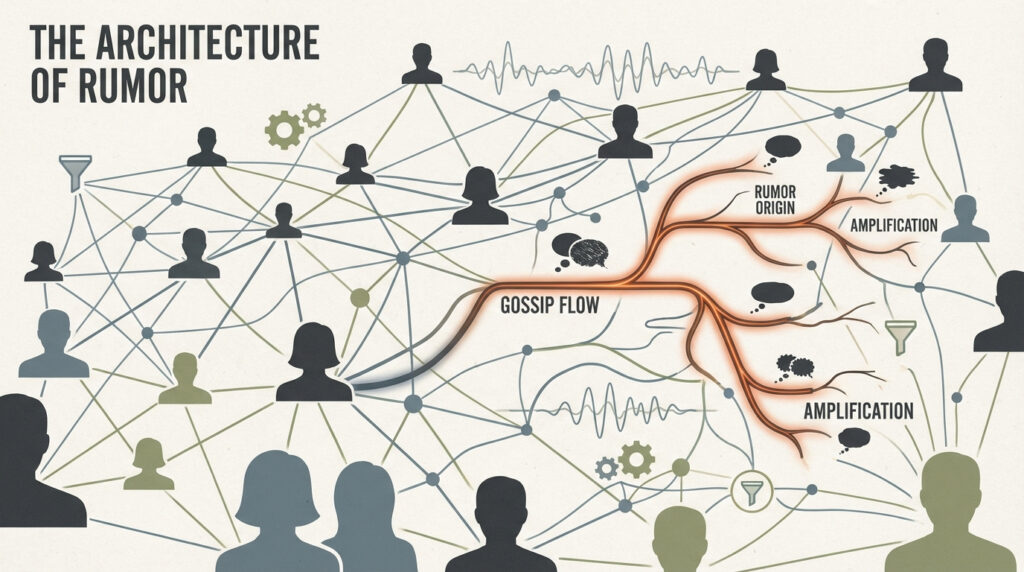

Can an AI chatbot change your mind about a conspiracy theory? According to a new PNAS Nexus study from researchers at MIT and the University of Regina, the answer is yes. Surprisingly, it makes no difference whether you think you are talking to an AI or to a human.

This discovery challenges decades of psychology research claiming conspiracy beliefs are nearly impossible to shift. Instead, it shows that calm, fact-based conversations — not shouting matches or social shame — can actually open people’s minds.

What the Researchers Did

Esther Boissin and her team recruited 955 volunteers who believed in either a conspiracy theory (like chemtrails or election tampering) or another epistemically unwarranted belief (like pseudoscientific health myths). Each participant chatted with GPT-4o, an OpenAI large language model of the kind that powers modern AI assistants.

Some participants were told they were talking to an AI, while others were told they were chatting with a human expert. In both cases, the model calmly discussed the participant’s belief, offering counter-evidence and logical reasoning to gently challenge it.

The twist? Whether participants thought the messenger was AI or human made no difference. Both groups reduced their belief strength by about 10 percentage points for conspiracies and 6 points for general pseudoscientific ideas.
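To make the "percentage points" comparison concrete, here is a minimal sketch of the arithmetic, assuming beliefs are rated before and after the conversation on a 0-100 scale. The ratings, the group labels `told_ai` and `told_human`, and the helper `mean_drop` are all invented for illustration; they are not the study's data or analysis code.

```python
from statistics import mean

# Hypothetical (before, after) belief ratings on an assumed 0-100 scale.
# These numbers are made up for illustration; they are NOT study data.
ratings = {
    "told_ai": [(80, 68), (90, 82), (70, 60)],
    "told_human": [(85, 74), (75, 66), (95, 85)],
}

def mean_drop(pairs):
    """Average reduction in belief, in percentage points (before minus after)."""
    return mean(before - after for before, after in pairs)

# Average drop within each group.
drops = {label: mean_drop(pairs) for label, pairs in ratings.items()}
for label, drop in drops.items():
    print(f"{label}: {drop:.1f} points")

# How much the drop differs depending on who participants thought they were talking to.
label_gap = drops["told_ai"] - drops["told_human"]
print(f"difference between labels: {label_gap:.1f} points")
```

A gap near zero between the two groups is what "made no difference" means here: the drop is about the same size whichever messenger participants believed they had. A real analysis would also need a statistical test on far more participants than this toy example uses.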

Why It Matters for Science People

From a cognitive-science perspective, this finding is revolutionary. It suggests that belief correction doesn’t depend on who is speaking, only on what is being said and how it’s delivered. The LLM’s persuasive power stems from targeted, evidence-based dialogue, not social status or emotional appeal.

This contrasts with earlier theories emphasizing “motivated reasoning” — the idea that people cling to falsehoods because admitting error threatens their identity. Instead, the data reveal that even entrenched beliefs are malleable when the counter-evidence is personalized and non-confrontational.

Why It’s Cool for Everyone Else

Imagine being able to talk through your most controversial ideas without being judged, interrupted, or insulted. That’s what the participants experienced. The AI didn’t shame them — it reasoned with them.

This makes sense: unlike humans, AI doesn’t come across as partisan, sarcastic, or emotional. People don’t feel the need to defend their group or social identity. The result? Real, reflective thinking.

Even when users thought they were chatting with a real person, the impact didn’t fade. That means the magic isn’t in the robot — it’s in the reasoning.

The Big Picture

AI might soon become one of humanity’s best tools for countering misinformation. Instead of fighting falsehoods with bans or labels, future AI systems could guide people through self-reflection and evidence-based reasoning—at scale.

That doesn’t mean human experts are obsolete. It means that when AI and humans both use patient, well-informed dialogue, truth actually has a chance to compete with conspiracy.

What’s Next

The researchers note that their study used English-trained models, meaning cross-cultural testing is needed. Future work may explore how localized AI models debunk falsehoods in different languages, cultures, and belief systems.

But one thing is already clear: talking to AI can make us more rational—not because we trust machines, but because the machines help us think.

Article derived from: Boissin, E., Costello, T. H., Spinoza-Martín, D., Rand, D. G., & Pennycook, G. (2025). Dialogues with large language models reduce conspiracy beliefs even when the AI is perceived as human. PNAS Nexus, 4(11), pgaf325. https://doi.org/10.1093/pnasnexus/pgaf325