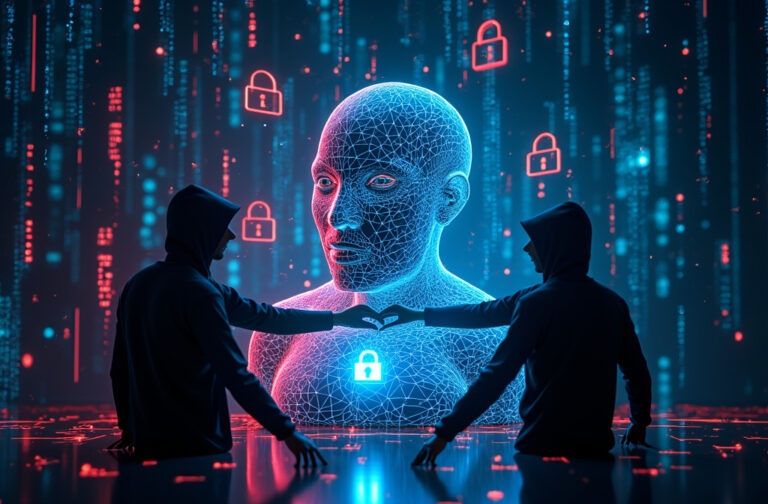

The AI Alignment Paradox: Can We Really Control AI Without Inviting Manipulation?

The AI Alignment Paradox highlights a critical issue in AI safety: aligning AI with human values is essential, yet doing so can make it easier for adversaries to manipulate it. As an AI system becomes more predictable in following ethical constraints, attackers can exploit that predictability to bypass its safeguards. This blog post explores the risks, real-world implications, and potential solutions for balancing AI security and alignment. How do we ensure AI remains ethical without making it vulnerable? Read on to explore this paradox and its impact on AI’s future.