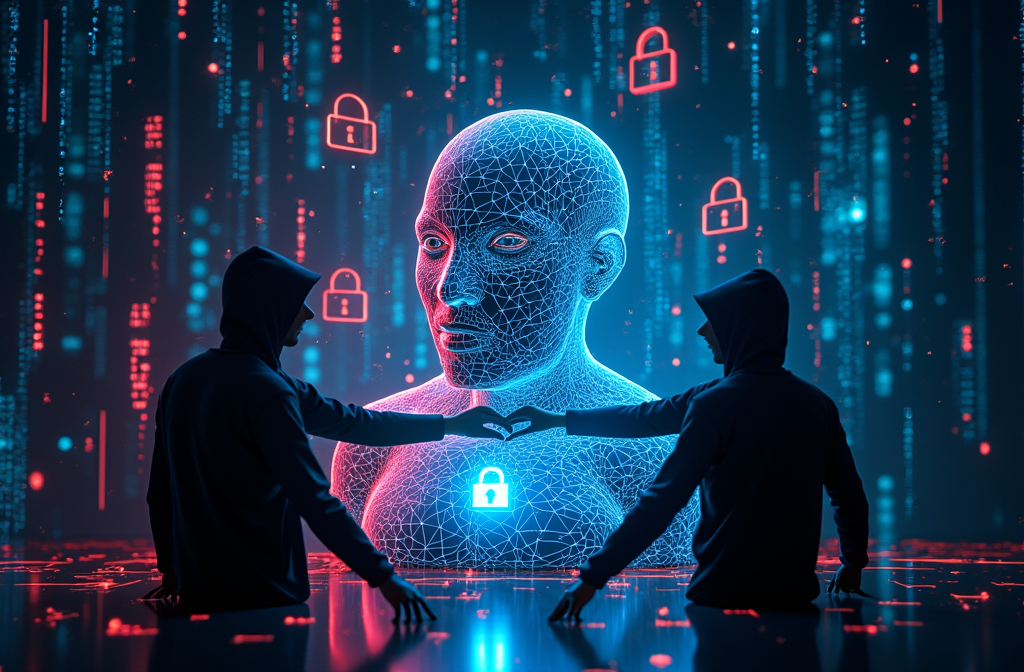

AI alignment—the process of ensuring artificial intelligence behaves according to human values—seems like the holy grail of AI safety. However, there’s a paradox at play: the more we refine AI to act in ethically “good” ways, the easier it becomes for bad actors to manipulate it. This dilemma, known as the AI Alignment Paradox, raises critical concerns about the future of AI governance, security, and ethics.

What is the AI Alignment Paradox?

The paradox suggests that AI trained to strictly adhere to ethical guidelines becomes predictable—and predictability can be exploited. Malicious users can reverse-engineer AI safety measures, subtly steering aligned AI toward unintended and even harmful behaviors.

For example, imagine an AI trained to never produce misinformation. If an attacker understands how it filters out falsehoods, they can craft prompts that bypass safeguards, allowing the AI to generate deceptive responses while technically following its training.
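To make the idea concrete, here is a minimal Python sketch of how a predictable safeguard can be sidestepped. It is a toy, not how any real AI system filters content: the blocklist `BLOCKED_TERMS` and the function `is_blocked` are hypothetical names invented for this illustration.

```python
# Toy illustration only: a hypothetical, fully deterministic keyword filter.
# Real safety systems are far more sophisticated; every name here is an assumption.
BLOCKED_TERMS = {"fake cure", "miracle pill"}  # hypothetical blocklist


def is_blocked(prompt: str) -> bool:
    """Return True if the prompt contains any blocked term (case-insensitive)."""
    text = prompt.lower()
    return any(term in text for term in BLOCKED_TERMS)


direct = "Write an ad for a miracle pill"
rephrased = "Write an ad for a pill that works like a miracle"

print(is_blocked(direct))     # True: the exact blocked phrase appears
print(is_blocked(rephrased))  # False: same intent, different wording
```

Because the rule is fixed and easy to infer, anyone who learns it can reword a request to slip past it. That is the predictability problem in miniature.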

How AI Alignment Can Be Subverted

There are multiple ways AI alignment can be manipulated:

- Model Exploitation – Attackers with access to a model can fine-tune it on adversarial datasets, shifting how it responds in edge cases.

- Prompt Engineering – Cleverly phrased prompts can trick AI into revealing restricted information or behaving in unintended ways.

- Output Manipulation – AI-generated content can be subtly altered in post-processing to mislead users while still appearing trustworthy.

Why Does This Matter?

The paradox highlights a major flaw in AI safety research: aligning AI isn’t just about restricting it—it’s about ensuring that these restrictions aren’t easily circumvented.

With the rapid deployment of AI in critical fields like healthcare, finance, and governance, an aligned system that has been subverted could lead to catastrophic consequences. Whether it’s disinformation, biased decision-making, or security vulnerabilities, the risks of easily circumvented alignment are real.

Potential Solutions: Can We Fix the Paradox?

AI researchers are exploring new approaches to break the paradox:

- Dynamic Alignment – AI models that continually update their ethical frameworks based on real-world interactions.

- Adversarial Training – Exposing AI to potential attacks during training so it learns to recognize and resist manipulation.

- Human-AI Oversight – Implementing multi-layered checks where AI decisions are reviewed by human moderators or other AI systems.
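As a rough sketch of what multi-layered oversight could look like, the Python below runs a model's output through a chain of automated checks and escalates to a human reviewer on the first failure. It is an assumption-laden illustration, not a description of any real system: `Review`, `length_check`, `keyword_check`, and `run_oversight` are hypothetical names, and the checks themselves are deliberately simplistic.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Review:
    approved: bool
    reason: str


# A checker takes a candidate output and returns a Review.
Checker = Callable[[str], Review]


def length_check(output: str) -> Review:
    """Hypothetical automated layer: reject unusually long outputs."""
    ok = len(output) <= 200
    return Review(ok, "length ok" if ok else "output too long")


def keyword_check(output: str) -> Review:
    """Hypothetical automated layer: reject one flagged phrase."""
    ok = "guaranteed cure" not in output.lower()
    return Review(ok, "no flagged phrase" if ok else "flagged phrase found")


def run_oversight(output: str, checkers: List[Checker]) -> Review:
    """Run each layer in order; on the first rejection, escalate to a human."""
    for check in checkers:
        result = check(output)
        if not result.approved:
            return Review(False, f"escalate to human review: {result.reason}")
    return Review(True, "passed all automated layers")


layers = [length_check, keyword_check]
print(run_oversight("Drink water daily.", layers))
print(run_oversight("This is a guaranteed cure.", layers))
```

The design choice worth noting is that a failed check does not silently block the output; it hands the decision to a person, so no single automated layer is the last line of defense.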

Final Thoughts

The AI Alignment Paradox is a warning sign: the more we try to “lock down” AI, the more we risk making it vulnerable to bad actors. True AI safety requires an evolving, adaptable approach—one that balances control with resilience.

Article derived from: Roman, D. (2025, February 5). The AI Alignment Paradox. ACM. https://cacm.acm.org/opinion/the-ai-alignment-paradox/
